Mastering 3 Industrial Depth Camera Accuracy Standards

Unlock peak robotic precision. Learn how mastering industrial depth camera accuracy optimizes autonomous navigation in complex warehouses. Contact us for OEM today!

1. Z-accuracy and RMS error in stereo vision systems

Look, in a busy warehouse, industrial depth camera accuracy is everything. It is the thin line between a robot smoothly picking a box and a mechanical collision that shuts down a line for two hours. I have seen too many engineers try to save a few bucks with hobbyist sensors, only to regret it when the “Z-accuracy” drifts by 10mm in a week.

Z-accuracy is the gap between the true distance to a target and the distance the camera reports. If your autonomous mobile robot (AMR) thinks a pallet is 2 meters away but it’s actually 2.005 meters, that 5mm error can cause a failed grasp. In the world of stereo vision systems, we quantify that error across many readings using Root Mean Square (RMS) error, which captures both a constant offset and frame-to-frame noise. If your RMS is high, your point cloud will look like a vibrating mess, making precision impossible.
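To make the RMS idea concrete, here is a minimal sketch in Python. It assumes you have pointed the camera at a target whose true distance you measured independently; the function name `depth_error_stats` and the sample readings are made up for illustration, not taken from any vendor SDK.

```python
import math

def depth_error_stats(readings_mm, true_distance_mm):
    """Return (bias, rms_error, noise_std) in mm for repeated readings of one target."""
    errors = [r - true_distance_mm for r in readings_mm]
    n = len(errors)
    bias = sum(errors) / n  # constant offset (the Z-accuracy part)
    rms_error = math.sqrt(sum(e * e for e in errors) / n)  # overall error
    noise_std = math.sqrt(sum((e - bias) ** 2 for e in errors) / n)  # jitter
    return bias, rms_error, noise_std

# Hypothetical readings of a pallet that is really 2005 mm away.
readings = [1999.0, 2001.0, 2000.0, 1998.0, 2002.0]
bias, rms, noise = depth_error_stats(readings, 2005.0)
print(f"bias={bias:.2f} mm  rms={rms:.2f} mm  noise={noise:.2f} mm")
```

Splitting the result this way is a design choice: a large bias with low noise usually points to calibration, while low bias with high noise points to matching quality or lighting.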

What’s more, the physical baseline (the distance between the two lenses) dictates your long-range industrial depth camera accuracy. A wider baseline is great for distance, but it makes the sensor bulky and pushes the minimum working range further out. For close-up work, you need a narrow baseline to shrink the “dead zone” right in front of the lens. Check out how different baselines stack up in this 3D Camera Survey — ROS-Industrial.
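The baseline trade-off follows from the standard pinhole stereo relation z = f·B/d (depth equals focal length times baseline divided by disparity). Here is a rough sketch of what that implies; the focal length, disparity search range, and matching error below are assumed values for illustration, not the specs of any particular camera.

```python
def depth_error_m(z_m, focal_px, baseline_m, disparity_error_px):
    """Approximate depth error at range z for a given disparity matching error."""
    return (z_m ** 2) / (focal_px * baseline_m) * disparity_error_px

def min_range_m(focal_px, baseline_m, max_disparity_px):
    """Closest distance the matcher can resolve (the 'dead zone' boundary)."""
    return focal_px * baseline_m / max_disparity_px

FOCAL_PX = 800.0         # assumed focal length in pixels
MAX_DISPARITY_PX = 128   # assumed disparity search range
MATCH_ERROR_PX = 0.1     # assumed sub-pixel matching error

for baseline in (0.05, 0.10):
    err_mm = depth_error_m(2.0, FOCAL_PX, baseline, MATCH_ERROR_PX) * 1000.0
    z_min = min_range_m(FOCAL_PX, baseline, MAX_DISPARITY_PX)
    print(f"baseline={baseline * 100:.0f} cm  error@2m={err_mm:.1f} mm  min range={z_min:.2f} m")
```

Under these assumptions, doubling the baseline halves the depth error at 2 m but also doubles the minimum range, which is the trade-off described above.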

| Metric | Consumer-Grade Sensor | Industrial-Grade Sensor |
|---|---|---|
| RMS Error (at 2m) | > 15mm (High Jitter) | < 3mm (Stable Data) |
| Z-Drift (Thermal) | Significant after 1 hour | Active Compensation Included |
| Fill Rate (Low Light) | < 70% | > 95% (Active IR) |

2. Spatial mapping technology and planar fit RMS

It’s not just about distance; it’s about how the camera sees the whole room. High-end spatial mapping technology allows a robot to build a “digital twin” of the floor. If the industrial depth camera accuracy is off, the robot might “miss” a thin cable or a small piece of debris. This is where we look at Planar Fit Accuracy: point the camera at a flat wall, fit an ideal plane to the depth data, and measure the RMS deviation of the points from that plane. The lower the number, the flatter a flat wall actually looks in the data.
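One way to compute a planar fit RMS is sketched below in plain Python. It assumes the wall roughly faces the camera, so a least-squares fit of z = a·x + b·y + c is a reasonable stand-in for a full orthogonal fit; the function names and the synthetic wall data are illustrative only.

```python
import math

def _det3(m):
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))

def fit_plane(points):
    """Least-squares fit of z = a*x + b*y + c; returns (a, b, c)."""
    n = len(points)
    sx = sum(p[0] for p in points)
    sy = sum(p[1] for p in points)
    sz = sum(p[2] for p in points)
    sxx = sum(p[0] * p[0] for p in points)
    syy = sum(p[1] * p[1] for p in points)
    sxy = sum(p[0] * p[1] for p in points)
    sxz = sum(p[0] * p[2] for p in points)
    syz = sum(p[1] * p[2] for p in points)
    normal_matrix = [[sxx, sxy, sx], [sxy, syy, sy], [sx, sy, n]]
    rhs = [sxz, syz, sz]
    det = _det3(normal_matrix)
    if abs(det) < 1e-12:
        raise ValueError("points are degenerate (collinear or too few)")
    coeffs = []
    for col in range(3):  # Cramer's rule, one coefficient per column
        m = [row[:] for row in normal_matrix]
        for r in range(3):
            m[r][col] = rhs[r]
        coeffs.append(_det3(m) / det)
    return tuple(coeffs)

def planar_fit_rms(points):
    """RMS of point-to-plane distances, in the same units as the input."""
    a, b, c = fit_plane(points)
    norm = math.sqrt(a * a + b * b + 1.0)
    sq = [((a * x + b * y + c - z) / norm) ** 2 for x, y, z in points]
    return math.sqrt(sum(sq) / len(sq))

# Synthetic, slightly tilted wall about 2 m away (coordinates in meters).
flat_wall = [(x * 0.5, y * 0.5, 2.0 + 0.1 * (x * 0.5) - 0.05 * (y * 0.5))
             for x in range(3) for y in range(3)]
# Same wall with +/- 2 mm of alternating depth noise added.
noisy_wall = [(x, y, z + (0.002 if i % 2 == 0 else -0.002))
              for i, (x, y, z) in enumerate(flat_wall)]

print(planar_fit_rms(flat_wall), planar_fit_rms(noisy_wall))
```

On real data you would feed in thousands of points from a region of interest on the wall rather than a 3×3 grid, and you may want to reject outliers before fitting.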

To be honest, the biggest killer of precision is “Z-Drift.” This happens when the camera gets hot. The internal components expand, the lenses shift by microns, and suddenly your industrial depth camera accuracy is out the window. If you are doing serious bin-picking, you need a depth sensing module built with a thermally stable chassis.

For projects that can’t afford a single millimeter of drift, I usually recommend the P008G Global Shutter Stereo Camera. This unit is a tank when it comes to spatial consistency and resisting thermal expansion.

Here’s the kicker: in GPS-denied environments, your SLAM (Simultaneous Localization and Mapping) algorithm leans heavily on the density and consistency of your point cloud. If your industrial depth camera accuracy drops, the robot starts to “drift” in its own map. It thinks it is in the hallway when it is actually about to hit a charging station.

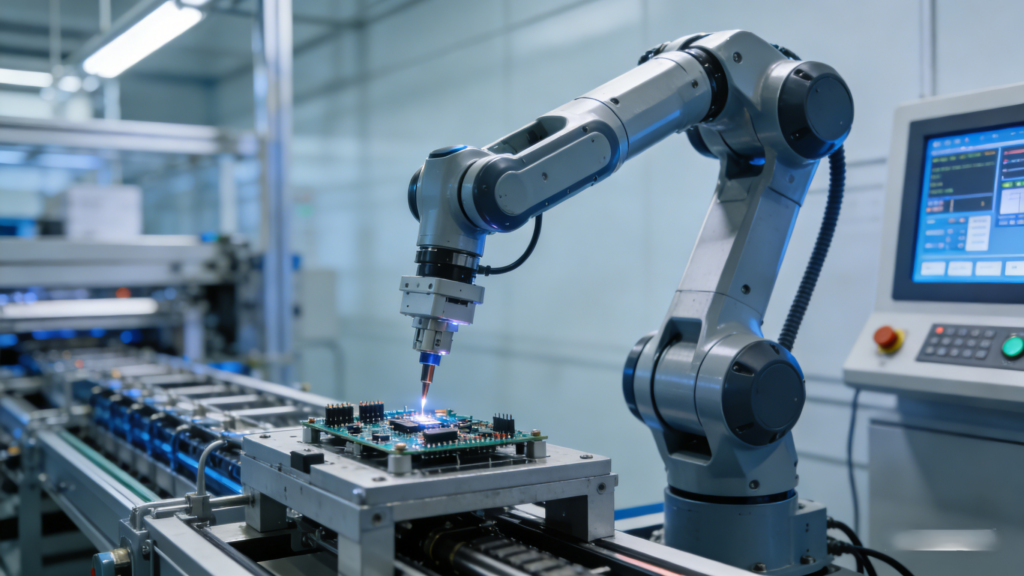

3. Dynamic obstacle avoidance sensor reliability

Static accuracy is easy. Keeping that industrial depth camera accuracy high while the robot is moving at 2 meters per second? That’s the real challenge. Most cheap cameras use rolling shutters, which create “Motion Artifacts.” This makes straight poles look like jelly, triggering the obstacle avoidance sensor to hit the emergency brake for no reason. This kills your warehouse throughput.
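A quick back-of-the-envelope calculation shows why rolling shutters hurt at speed. The sketch below assumes a hypothetical 20 ms sensor readout time (check your sensor’s datasheet for the real figure) and simply multiplies it by the robot’s speed.

```python
def rolling_shutter_travel_mm(speed_m_per_s, readout_time_s):
    """Distance the camera moves between exposing the first and last image row."""
    return speed_m_per_s * readout_time_s * 1000.0

def row_capture_offset_s(row, total_rows, readout_time_s):
    """Time offset of a given row relative to the first row (linear readout assumed)."""
    return readout_time_s * row / (total_rows - 1)

ROBOT_SPEED = 2.0     # m/s, from the scenario above
READOUT_TIME = 0.020  # s, assumed rolling-shutter readout time

travel = rolling_shutter_travel_mm(ROBOT_SPEED, READOUT_TIME)
print(f"Camera moves about {travel:.0f} mm while one frame is read out")
print(f"Global shutter (zero readout skew): {rolling_shutter_travel_mm(ROBOT_SPEED, 0.0):.0f} mm")
```

Under that assumption the top and bottom of the same frame are captured from positions a few centimeters apart, which is enough to bend a straight pole in the image.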

Global shutter technology is the only way to go here. It snaps the whole frame at once, ensuring every pixel of your depth sensing module is perfectly synced. No jelly effect, no false positives. Just clean, actionable data for your navigation stack.

Bottom line: you also need to worry about “Fill Rate.” If you’re looking at a plain white wall, many stereo vision systems “go blind” because there’s no texture to lock onto. Industrial-grade modules use active IR projectors to “spray” an invisible pattern on the wall, ensuring the obstacle avoidance sensor always has a solid depth map to work with.
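Fill rate is easy to check yourself. Here is a minimal sketch that assumes the depth map is a 2D grid where invalid pixels are encoded as 0; that is a common convention, but your SDK may use a different marker, so treat `invalid_value` as something to confirm.

```python
def fill_rate(depth_map, invalid_value=0):
    """Fraction of pixels in the depth map that carry a valid depth reading."""
    total = sum(len(row) for row in depth_map)
    if total == 0:
        return 0.0
    valid = sum(1 for row in depth_map for d in row if d != invalid_value)
    return valid / total

# Tiny hypothetical depth map in millimeters; 0 marks pixels with no match.
depth_map = [
    [1500, 0, 1502],
    [0, 0, 1498],
]
print(f"fill rate: {fill_rate(depth_map):.0%}")
```

Running this on a frame of a plain white wall with the IR projector off and then on is a simple way to see how much the active pattern helps.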

Environmental variables and depth sensing module calibration

Industrial floors are rarely perfect. You’ve got flickering LED lights, sunlight streaming through skylights, and massive temperature swings. High-intensity ambient light can wash out a sensor. That’s why we use narrow-band pass filters to make sure the camera only sees its own IR light. You can read more about handling these environments in the Ruggedized / Industrial Stereo Depth – RealSense guide.

I always tell clients that factory calibration is just the starting point. Shipping a robot across the country in a vibrating truck can knock things out of alignment. That is why we built self-calibration logic into the Viobot2 Autonomous Navigation Module. It lets the robot “fix” its own eyes on the fly, maintaining top-tier industrial depth camera accuracy without human intervention.

Here’s a tip: for outdoor use or uncooled warehouses, look for modules with internal thermal sensors. The firmware uses those temperature readings to adjust the depth calculation in real time as the hardware heats up. Without this, your 1mm precision turns into 10mm error as soon as the sun hits the roof in the afternoon.
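To illustrate the idea only, here is a deliberately simplified sketch of thermal compensation. It assumes depth error scales linearly with temperature at a fixed rate; the function name, the reference temperature, and the 100 ppm/°C coefficient are all hypothetical. Real modules typically rely on per-unit calibration data rather than a single constant.

```python
def compensate_depth_mm(raw_depth_mm, sensor_temp_c,
                        ref_temp_c=25.0, drift_ppm_per_c=100.0):
    """Undo an assumed linear scale drift using the module's temperature reading."""
    scale = 1.0 + drift_ppm_per_c * 1e-6 * (sensor_temp_c - ref_temp_c)
    return raw_depth_mm / scale

# Hypothetical reading taken after the housing has warmed up to 55 degrees C.
raw = 2006.0
corrected = compensate_depth_mm(raw, sensor_temp_c=55.0)
print(f"raw={raw:.1f} mm  corrected={corrected:.1f} mm")
```

The point of the sketch is the structure: a temperature reading goes in alongside every depth value, and the correction is applied continuously instead of once at the factory.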

Implementing accuracy standards in OEM/ODM projects

When we help B2B clients with OEM projects, we don’t just pick the most expensive sensor. We balance FOV (Field of View) and resolution. Do you need a wide FOV for docking or a narrow FOV for long-range detection? Customizing these parameters is the secret to maximizing industrial depth camera accuracy where it actually matters for your specific use case.

We also use a trick called “Sub-pixel Interpolation.” It’s a software technique that estimates where the best stereo match falls between two physical pixels, so disparity (and therefore depth) is resolved in fractions of a pixel instead of whole-pixel steps. It’s how we get sub-millimeter edge detection out of standard hardware. When paired with high-performance Edge AI, you get low latency and high industrial depth camera accuracy simultaneously.
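A common way to do sub-pixel interpolation is to fit a parabola through the matching cost at the best integer disparity and its two neighbors. The sketch below shows that textbook approach; it is not necessarily what any specific product runs, and the cost values are synthetic.

```python
def subpixel_disparity(costs, best_index):
    """Refine an integer disparity index using a parabola through three cost samples."""
    if best_index <= 0 or best_index >= len(costs) - 1:
        return float(best_index)  # no neighbors on both sides, keep integer result
    c_prev = costs[best_index - 1]
    c_best = costs[best_index]
    c_next = costs[best_index + 1]
    curvature = c_prev - 2.0 * c_best + c_next
    if curvature <= 0.0:
        return float(best_index)  # flat or non-convex cost, refinement is unreliable
    return best_index + 0.5 * (c_prev - c_next) / curvature

# Synthetic matching costs whose true minimum sits at index 2.3.
costs = [(i - 2.3) ** 2 for i in range(5)]
best = min(range(len(costs)), key=lambda i: costs[i])
print(subpixel_disparity(costs, best))
```

The guard clauses matter in practice: at the edges of the search range or on textureless surfaces the cost curve is not a clean bowl, and falling back to the integer value is safer than trusting the fit.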

Frequently asked questions (FAQ)

How to calibrate industrial depth cameras for precision?

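Calibration involves imaging high-contrast patterns, such as a checkerboard, and using software to estimate the “intrinsic” parameters of each lens (focal length, optical center, distortion) and the “extrinsic” parameters (the position and rotation of the two lenses relative to each other). For the best industrial depth camera accuracy, use self-calibration software that runs during operation to account for bumps and vibrations.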

Why does depth accuracy fluctuate in variable lighting?

Most stereo vision systems need features to “see.” Glare or shadows hide these features. Using a depth sensing module with an active IR projector solves this by creating its own texture, regardless of the lighting conditions.

What is the best sensor for robotic arms?

For arms, you want a short-range, high-resolution module with a high fill rate. This ensures the gripper can see small parts with sub-millimeter industrial depth camera accuracy even in cluttered or messy bins.

Does temperature affect industrial depth camera accuracy?

Absolutely. Heat causes the camera housing to expand. Professional-grade cameras include thermal compensation logic to adjust the measurements as the temperature changes, preventing “Z-drift.”

Conclusion

Long story short: mastering industrial depth camera accuracy is a balancing act between physics and software. Whether you are moving a 1-ton AGV or a tiny robotic gripper, the standards of Z-accuracy and spatial mapping are your foundation. I’ve seen companies cut corners with consumer gear, only to come back to industrial solutions after their first big system failure.

Don’t let poor sensor data bottleneck your automation. MRP Solutions specializes in high-end OEM/ODM services for stereo vision systems and autonomous navigation. We know the ins and outs of industrial depth camera accuracy because we build this hardware from the ground up to survive the real world.

Contact our engineering team today for a customized consultation and technical datasheet. Let’s get your robotic fleet running with surgical precision.

Image by: Tima Miroshnichenko

https://www.pexels.com/@tima-miroshnichenko